Training Feedback Survey: Free Post-Training Evaluation with AI

8 targeted questions evaluating training relevance, delivery quality, practical applicability, pace, materials, and interaction. Send immediately after the session. AI generates an instant report comparing results across trainers and sessions so you know exactly what to improve.

Kirkpatrick Model: The gold standard for training evaluation, with 4 levels: Level 1 (Reaction: did participants like it?), Level 2 (Learning: did they learn?), Level 3 (Behavior: do they apply it?), and Level 4 (Results: what was the business impact?). This survey covers Level 1 and elements of Level 2.

Training ROI: Measuring return on investment for learning programs, from participant satisfaction (Level 1) through behavior change to business impact. Companies with structured training feedback see 25-300% ROI on their L&D investment.

Learning Transfer: The degree to which learned skills are actually applied in daily work after training. Research shows skills not applied within 30 days are unlikely to be applied at all, making post-training feedback critical for identifying transfer barriers early.

What Is a Post-Training Feedback Survey?

A post-training feedback survey, also called training evaluation form, workshop feedback form, or course evaluation, measures how participants experienced a learning session. Based on Kirkpatrick Level 1 (Reaction), it captures immediate satisfaction, perceived relevance, delivery quality, and intent to apply what was learned.

ATD research shows that only 35% of organizations systematically evaluate training effectiveness, meaning most companies invest in learning and development without knowing what actually works. Our template uses 8 targeted questions covering all key dimensions, and AI generates instant reports that compare results across sessions, trainers, and topics. The result: every training gets better because feedback drives continuous improvement instead of sitting in a spreadsheet.

Why Collect Post-Training Feedback?

Continuous Training Improvement

Each feedback round makes the next session better. Iterate on content, delivery, pace, and materials based on real participant data, not assumptions. Move beyond the 'Happy Sheet' to actionable insights.

Training programs with systematic feedback loops improve effectiveness by 40% (ATD).

AI Cross-Session Analysis

AI compares feedback across sessions, trainers, and topics to identify what consistently works and what doesn't, revealing patterns invisible in individual evaluations. Benchmark each training against your organization average.

Cross-session analysis reveals trainer-specific and content-specific improvement patterns invisible in single evaluations.

Quick, Non-Intrusive & Timely

8 questions, 5 minutes. Send immediately after the training session or workshop while impressions are fresh. Feedback collected within 24 hours is significantly more accurate and detailed than delayed surveys.

Feedback collected within 24 hours is 50% more accurate than delayed post-training surveys.

Post-Training Survey Questions: All 8 Dimensions

8 Likert-scale questions, one per training effectiveness dimension, covering relevance, delivery quality, practical applicability, pace, materials, interaction, expectation fulfillment, and recommendation. Based on Kirkpatrick Level 1 evaluation best practices.

Content & Relevance

The training content was relevant to my work.

Relevance is the #1 predictor of whether training content gets applied on the job. If participants don't see the connection to their work, knowledge transfer fails regardless of delivery quality.

I learned practical skills I can apply immediately.

Practical applicability separates effective training from wasted time. This question bridges Kirkpatrick Level 1 (Reaction) and Level 2 (Learning), measuring perceived readiness to apply.

The training materials were clear and well-organized.

Material quality affects both learning during the session and reference value afterward. Well-organized materials serve as job aids that extend the training's impact beyond the classroom.

Delivery & Interaction

The trainer communicated the content effectively.

Delivery quality is trainer-specific. Tracking this across sessions reveals which trainers excel and where coaching might help, making it the most actionable dimension for trainer development.

The pace of the training was appropriate.

Pace problems are the #1 complaint in training evaluations: too fast loses beginners, too slow bores experienced participants. A low score here often means the audience was poorly segmented.

There was enough opportunity for interaction and questions.

Interaction opportunities predict learning retention. Passive lecture formats score high on content but low on interaction. This gap reveals whether the format needs more exercises, discussions, or Q&A.

Outcome & Recommendation

The training met my expectations.

Expectation fulfillment measures marketing-to-delivery alignment. Low scores here often indicate a description or title mismatch rather than poor content, an easy fix with high impact.

I would recommend this training to colleagues.

The training NPS: recommendation intent is the strongest single predictor of actual impact. High relevance + low recommendation signals that content was good but execution needs work.
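As a rough illustration of how a recommendation score could be derived from this question, here is a minimal sketch. Standard NPS uses a 0-10 scale (9-10 promoters, 0-6 detractors); the mapping below onto a 5-point Likert scale (5 = promoter, 4 = passive, 1-3 = detractor) is an assumption made for this example, not a rule stated by the template. The function name and sample ratings are hypothetical.

```python
def nps_style_score(ratings):
    """NPS-style score from 1-5 Likert ratings.

    Assumed mapping (not part of the template): 5 = promoter,
    4 = passive, 1-3 = detractor. Result ranges from -100 to 100.
    """
    if not ratings:
        raise ValueError("ratings must not be empty")
    promoters = sum(1 for r in ratings if r == 5)
    detractors = sum(1 for r in ratings if r <= 3)
    return round(100 * (promoters - detractors) / len(ratings))


# Hypothetical answers to "I would recommend this training to colleagues."
recommend_ratings = [5, 5, 4, 3, 5, 2, 4, 5, 5, 4]
score = nps_style_score(recommend_ratings)
print(score)
```

With these sample ratings, five promoters and two detractors out of ten responses give a score of 30. If you change the cut-offs, keep them fixed across sessions so the scores stay comparable.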

How to Analyze Training Evaluation Results

Send Within 24 Hours

Share the post-training survey link immediately after the session, while impressions are fresh. Delayed feedback loses accuracy as memory fades and recall becomes biased.

Review Dimension Gaps

High relevance but low applicability? The content is right but exercises need work. High delivery but low materials? The trainer is great but needs better handouts. AI highlights these patterns.
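The gap check described here can be sketched in a few lines. This is a minimal example under stated assumptions: responses are 1-5 Likert scores, the dimension keys and sample data are hypothetical, and the 1.0-point threshold for what counts as a gap is an arbitrary choice for illustration.

```python
from statistics import mean

# Hypothetical responses: one dict per participant, 1-5 Likert scores.
responses = [
    {"relevance": 5, "applicability": 2, "delivery": 4, "materials": 4},
    {"relevance": 4, "applicability": 3, "delivery": 5, "materials": 4},
    {"relevance": 5, "applicability": 2, "delivery": 4, "materials": 4},
]


def dimension_means(responses):
    """Average score per dimension across all participants."""
    dims = list(responses[0].keys())
    return {d: mean([r[d] for r in responses]) for d in dims}


def dimension_gaps(means, min_gap=1.0):
    """Return (higher, lower, gap) triples where one dimension
    outscores another by at least min_gap, largest gap first."""
    gaps = []
    for high, high_score in means.items():
        for low, low_score in means.items():
            if high_score - low_score >= min_gap:
                gaps.append((high, low, round(high_score - low_score, 2)))
    return sorted(gaps, key=lambda g: g[2], reverse=True)


means = dimension_means(responses)
gaps = dimension_gaps(means)
for high, low, gap in gaps:
    print(f"High {high}, low {low}: gap of {gap} points")
```

In this sample, every flagged pair has applicability as the weaker dimension, which matches the "content is right but exercises need work" reading.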

Compare Across Sessions & Trainers

AI tracks training evaluation trends across multiple sessions to show improvement over time, and benchmarks each session against your organization average. This reveals trainer-specific strengths and development areas.
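To make the comparison concrete, here is a minimal sketch of how each trainer's average on one dimension could be set against the organization average. The session records, field names, and the `trainer_vs_org` helper are hypothetical and only illustrate the idea; they do not describe how the product computes its benchmarks.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-session averages for the delivery dimension (1-5 scale).
sessions = [
    {"session": "2024-01", "trainer": "A", "delivery": 4.5},
    {"session": "2024-02", "trainer": "B", "delivery": 3.2},
    {"session": "2024-03", "trainer": "A", "delivery": 4.3},
    {"session": "2024-04", "trainer": "B", "delivery": 3.6},
]


def trainer_vs_org(sessions, dimension):
    """Difference between each trainer's mean score and the mean
    over all sessions, for one dimension (positive = above average)."""
    org_avg = mean([s[dimension] for s in sessions])
    by_trainer = defaultdict(list)
    for s in sessions:
        by_trainer[s["trainer"]].append(s[dimension])
    return {t: round(mean(scores) - org_avg, 2) for t, scores in by_trainer.items()}


offsets = trainer_vs_org(sessions, "delivery")
for trainer, offset in offsets.items():
    print(f"Trainer {trainer}: {offset:+.2f} vs. organization average")
```

Here the organization average is weighted by session, not by participant; with very different group sizes you may prefer to average over individual responses instead.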

Iterate & Improve

Use the lowest-scoring dimensions to guide specific improvements for the next session. Close the loop: share with participants what changed based on their feedback, as this increases future response rates.

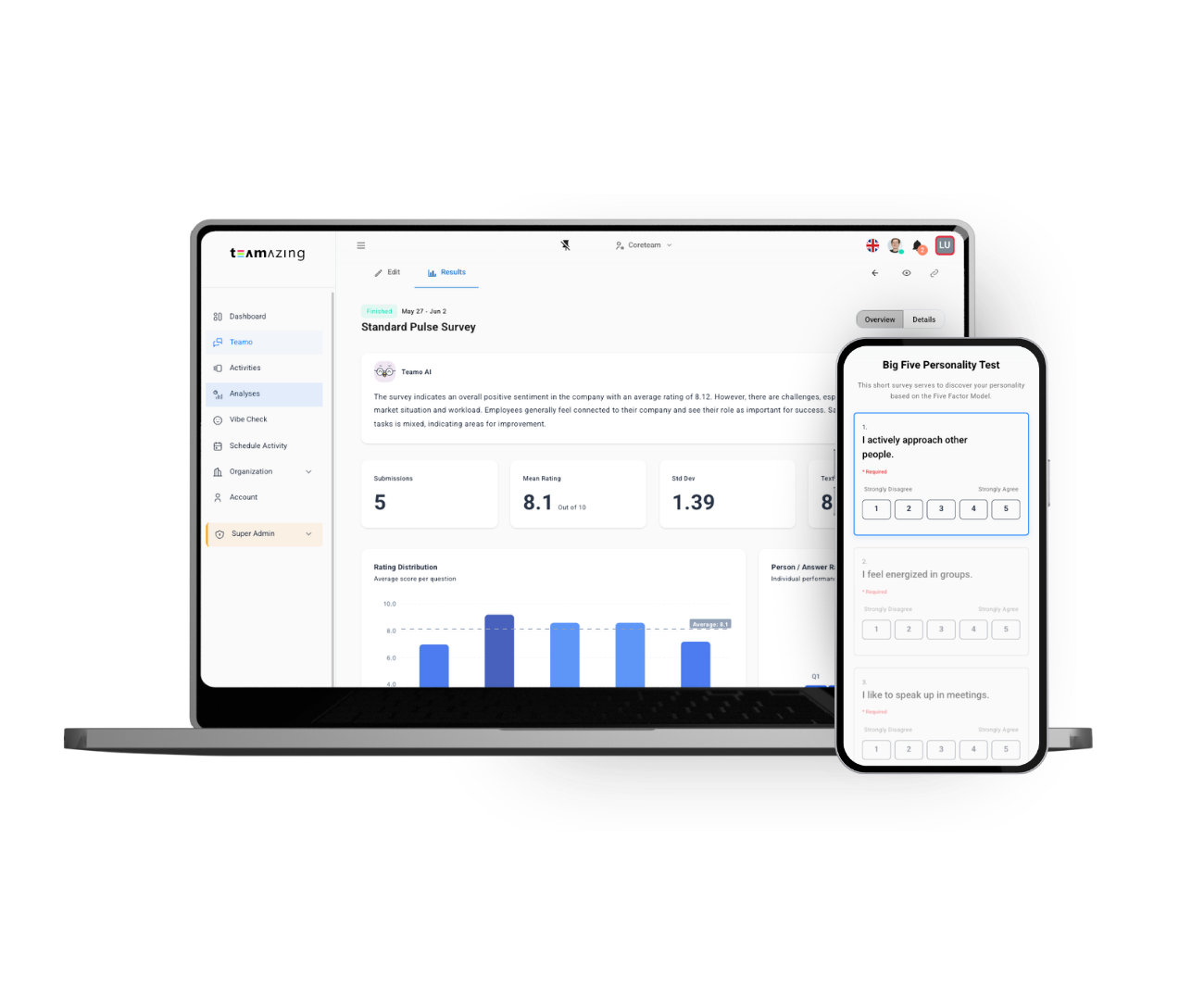

Teamo AI benchmarks each training session against your organization average and flags statistically significant deviations, so you see instantly whether this workshop or seminar was above or below par on each dimension. Over time, AI identifies which training formats, topics, and trainers consistently deliver the best results.
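One simple way to think about "statistically significant deviation" is a z-test of the session mean against the organization's historical mean. The sketch below is an assumption for illustration, not Teamo AI's actual method: it treats Likert scores as numeric, assumes the organization-wide mean and standard deviation are known, and uses the conventional 1.96 cut-off (roughly a 5% two-sided level). All names and numbers are hypothetical.

```python
from math import sqrt
from statistics import mean


def flag_deviation(session_scores, org_mean, org_sd, z_threshold=1.96):
    """Compare one session's mean score on a dimension with the
    organization average using a z-test (an approximation for Likert data)."""
    n = len(session_scores)
    if n == 0 or org_sd <= 0:
        raise ValueError("need at least one score and a positive org_sd")
    session_mean = mean(session_scores)
    # Standard error of the session mean under the organization-wide spread.
    z = (session_mean - org_mean) / (org_sd / sqrt(n))
    if z >= z_threshold:
        status = "above"
    elif z <= -z_threshold:
        status = "below"
    else:
        status = "within"
    return {"mean": session_mean, "z": round(z, 2), "status": status}


# Hypothetical pace scores for one workshop vs. an organization
# average of 3.9 with a standard deviation of 0.8.
pace_scores = [2, 3, 2, 3, 2, 3, 2, 2, 3, 2]
result = flag_deviation(pace_scores, org_mean=3.9, org_sd=0.8)
print(result["status"], result["z"])
```

With only a handful of responses, small differences will usually land in the "within" band, which is the intended behavior: a single low rating should not flag a whole session.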

How It Works

Choose Template

Select the Training Feedback Survey template. Send to participants immediately after the training session, workshop, or seminar.

5-Minute Post-Training Feedback

8 quick Likert-scale questions covering all training effectiveness dimensions. Optionally, make the survey non-anonymous to allow individual follow-up with participants.

Get AI Training Report

Instant AI analysis with dimension scores, comparison to previous sessions and trainers, and specific improvement suggestions for your next training.
